Data Annotation is the Fuel of Machine Learning and Deep Learning

Looking back to 2017, when we embarked on the transition of all our statistical models to neural network-based models, I cannot help but be amazed at how we have moved beyond the talk of Artificial Intelligence (AI) being science fiction to AI being almost everywhere.

Intelligent applications can already be seen every day in our smartphones and their apps, and increasingly in fintech and other new financial products, cars, intelligently targeted marketing campaigns, life sciences and, thanks to deep Natural Language Processing, many business decisions. In fact, the conversation is increasingly about ethical issues and responsible AI, as well as making AI more inclusive of other languages.

I was lucky enough to present Pangeanic’s developments and our participation in several EU NLP projects this week as part of ELRC_Spain2021. It was extremely refreshing to meet (online) so many like-minded professionals in the NLP space. And of course, data annotation came up, along with the efforts of several European projects to produce more open data and anonymization services for public administrations.

AI must be the most quoted acronym on the internet… it is everywhere, but the promise of a well-functioning, bespoke, self-learning and automated AI use case seldom comes to life because of one short and sexy word… data!

The World of High-Quality Training Data

Some translation companies struck it lucky some years ago when they found that large language datasets were being requested to build a wide range of AI applications. But not all translation companies saw this opportunity, and not everywhere. Data-for-AI providers need a problem-solving mindset. They need to understand that as pictures, speech and text data are collected, they need to be annotated by humans. Once annotated, the data is fed through algorithms that produce AI models. There are also a number of successful start-ups that aim to become the oil firms of the 21st century. Yes, data is the new fuel. It is the fuel that will cut costs by orders of magnitude, so that we will pay $/€1 for our cars, smartphones (if they still exist in 10 years), services, etc., where we now pay $/€100.

But what’s better… AI will enable us to do things 100 times better than we do now. It’s a win. For most of us, this demand has been client-driven, and we have learnt a thing or two along the way.

Machine Learning (ML) and Deep Learning (DL) systems require large amounts of data to continuously improve and learn. This is a science based on pattern recognition (the name used before AI became so popular). Algorithms need data as fuel to identify patterns and trends, predict outcomes, categorize and classify data, and so on. These are tasks that take us hours or days, need teams of people, or that we simply can’t handle, but that algorithms perform very efficiently, like a mass-produced Enigma machine. Nevertheless, the quality of the data is even more critical than the amount. Put some “noise” or “dirt” in the system, feed it bias in the shape of too many masculine adjectives or too few pictures of one kind or another, skip proper QA before delivery, and you have just spent hours and thousands of $/€ to guarantee yourself low-performing, inaccurate AI results.
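To make the point about “noise” and bias a little more concrete, here is a minimal sketch of the kind of check that could run before a labeled dataset is delivered. It is only an illustration: the function name `label_report`, the `min_share` threshold of 20% and the sample records are all assumptions made for this example, not a description of any particular QA pipeline.

```python
from collections import Counter


def label_report(records, min_share=0.2):
    """Summarise label balance and duplicate texts in (text, label) pairs.

    min_share is a hypothetical threshold: labels whose share of the
    dataset falls below it are flagged as underrepresented.
    """
    # Count how often each label occurs and convert counts to shares.
    label_counts = Counter(label for _, label in records)
    total = sum(label_counts.values())
    shares = {label: count / total for label, count in label_counts.items()}

    # Labels that make up too small a part of the data (possible bias).
    underrepresented = sorted(
        label for label, share in shares.items() if share < min_share
    )

    # Texts that appear more than once after trimming and lowercasing (noise).
    text_counts = Counter(text.strip().lower() for text, _ in records)
    duplicates = sorted(text for text, n in text_counts.items() if n > 1)

    return {
        "shares": shares,
        "underrepresented": underrepresented,
        "duplicates": duplicates,
    }


# Hypothetical sample: five "cat" examples, one "dog", one repeated sentence.
sample = [
    ("The cat sat on the mat", "cat"),
    ("A dog barked", "dog"),
    ("the cat sat on the mat", "cat"),
    ("Cats purr", "cat"),
    ("Kittens play", "cat"),
    ("Cat naps all day", "cat"),
]

report = label_report(sample)
print(report["underrepresented"])
print(report["duplicates"])
```

On this sample the report flags “dog” as underrepresented and the repeated sentence as a duplicate. Real QA goes much further (annotator agreement, label correctness, coverage), but even a simple check like this catches some of the imbalance and dirt described above.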

So, how do you ensure good-quality data? This question typically arises when data availability and volumes were not part of the initial discussion when kicking off an AI project. Any company of any size needs to start designing its own data strategy in the 21st century. IBM has been pretty successful at using its own data to create several solutions. However, the collection, cleansing and annotation of data need to be part of the plan before any AI project starts. So does finding the right partners for the journey. Again, because of their distributed production systems and semi-crowd management, language companies have adapted pretty well to the needs of the new data market. But sooner or later, they have all learnt that they require data scientists, and that data annotation is key to the whole process.

What is Data Annotation?

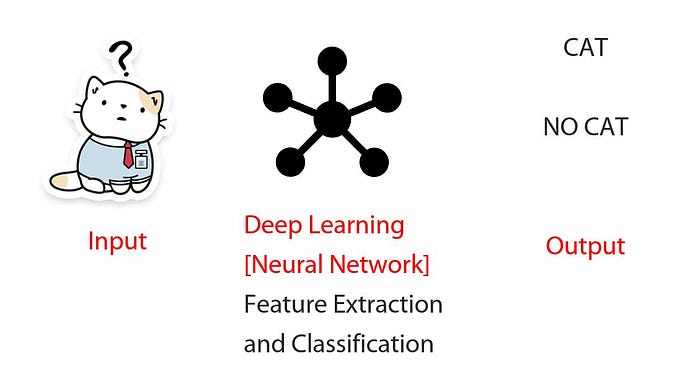

Data Annotation is the process by which we enrich our raw data by labeling it with “classification” information. If you are not familiar with the difference between Machine Learning and Deep Learning, let’s simplify it by saying that in Machine Learning you “label” all the pictures of cats on one side and dogs on the other for the system to learn, whereas Deep Learning models use different layers to learn and discover insights from the data. Deep Learning is a subset of Machine Learning.

Some popular applications of deep learning are machine translation systems (we know a bit about that at Pangeanic), natural language processing, document classification, etc. In annotation, we label the classes and/or objects in our texts, images, videos and audio. Labels provide additional information to the system, so they actually make our data smarter, and our models become capable of understanding deeper relationships between the classes and grasping their meaning (for example, that the picture shows a cat and not a young lion cub, or that “Paris” is usually a location but the name of a person in certain cases).
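As a rough illustration of the “Paris” example, the sketch below shows one way a text annotation could be stored: a label attached to a span of characters. The `Span` class, the `annotate` helper, the label names `LOCATION` and `PERSON`, and the two sentences (including the made-up person “Paris Martin”) are assumptions for this example only, not the format of any specific annotation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Span:
    """A labeled stretch of text, stored as character offsets."""
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    label: str


def annotate(text, phrase, label):
    """Label the first occurrence of phrase in text.

    Raises ValueError if the phrase does not occur in the text.
    """
    start = text.index(phrase)
    return Span(start, start + len(phrase), label)


def labeled_text(text, spans):
    """Return (surface text, label) pairs for each span."""
    return [(text[s.start:s.end], s.label) for s in spans]


# The same word receives a different label depending on its context.
sentence_1 = "We opened an office in Paris last year."
sentence_2 = "Paris Martin signed the contract."

spans_1 = [annotate(sentence_1, "Paris", "LOCATION")]
spans_2 = [annotate(sentence_2, "Paris Martin", "PERSON")]

print(labeled_text(sentence_1, spans_1))
print(labeled_text(sentence_2, spans_2))
```

Storing offsets rather than just the word keeps the label tied to one specific occurrence in its context, which is what lets a model learn that the same string can belong to different classes.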

We are now getting closer to the way our own brains work… by references and by association.

Types of annotation

So, we now see that annotation can take different shapes. Annotation processes will depend on the problem we are focusing on and the type of data available. The four most common data types for annotation are:

1. text,

2. images,

3. audio and

4. video

Different strategies are required when tackling each one of them: each process may call for different tools, different skills and different people. The goal is for the ML/DL algorithms to learn from human experience, imitate our reasoning and produce “decisions” and “insights” at scale. But how we do that… is for the next article!